The wait is over and Splunk 9 is officially here! This release introduces a number of features and improvements aimed at making life easier for Splunk admins and users alike. Wondering which announcements and improvements really matter for you? Join us as we investigate and explore some of our favorite discoveries!

Introducing the Ingest Actions UI in Splunk

It’s important in any Splunk deployment to ensure you’re ingesting the data you need, where you need it, when you need it, in the structure you need it in, and all without blowing out your license.

Splunk has long offered various methods by which to hone data, but those methods are far from user friendly. Data admins needed to know the proper syntax and locations to manually edit configuration files and, even then, had no simple way to test their work and ensure it’s functioning as intended. This could result in the arduous process of manually deleting buckets of sensitive data as admins iteratively test new configurations and syntax.

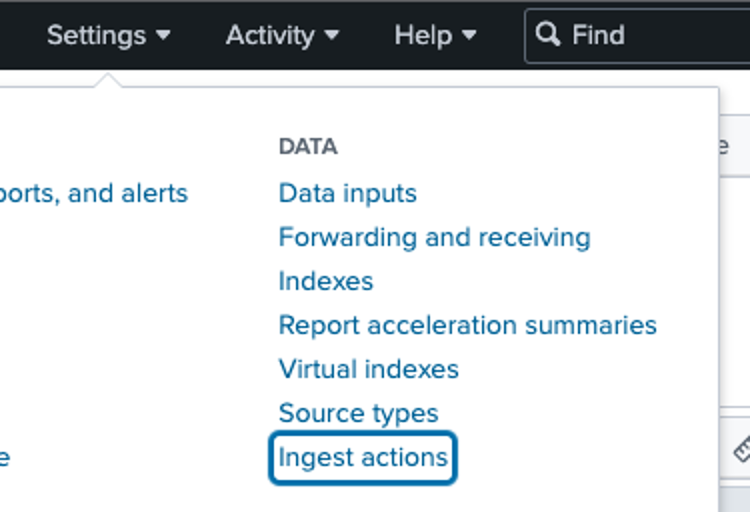

Splunk 9 has finally addressed this sore spot, with the new Ingest Actions user interface, found under Data Settings!

With Ingest Actions, it’s now easy to preview and define data masking, filtering, and even routing!

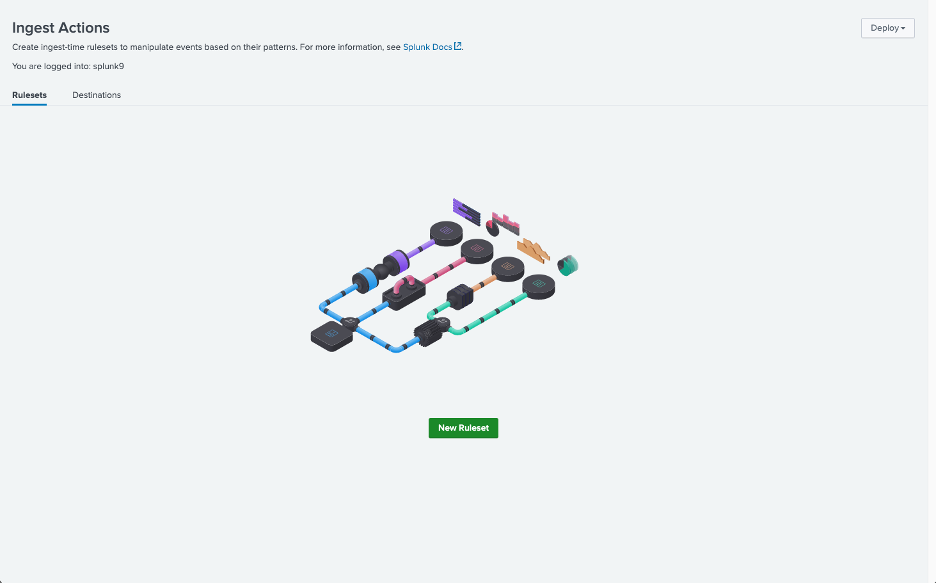

Rulesets

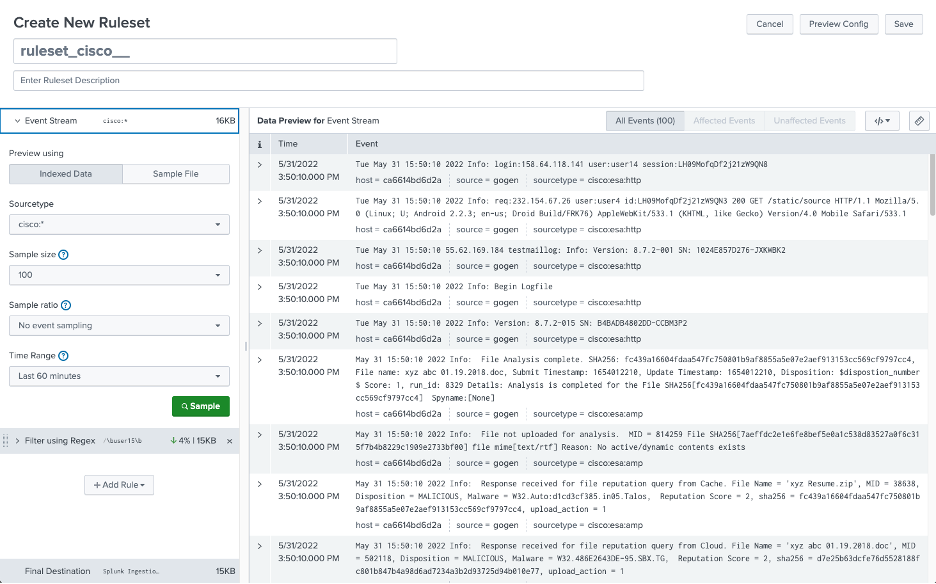

A ruleset consists of an event stream to which any number of rules are applied. The event stream can be indexed data, a sample file, or even text from your clipboard.

Once the data has been sampled, rules may be previewed on it before being saved.

When using indexed data, you must select a sourcetype. Only sourcetypes defined in your props.conf file will be listed, but any undefined sourcetype may be added manually.

Additionally, the filter accepts wildcards:

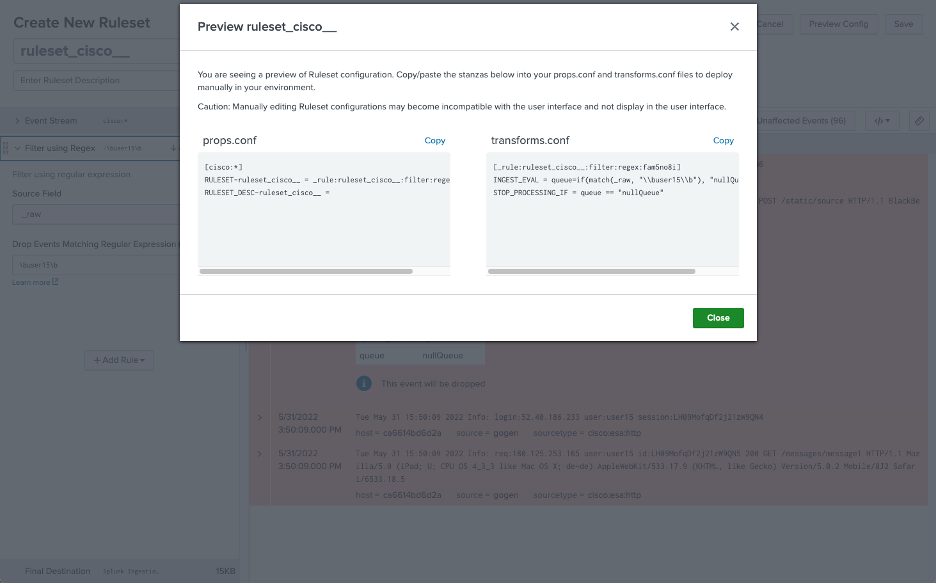

Note, however, that using wildcards will result in invalid syntax for the props.conf stanza title.

Luckily, there is a workaround to make this stanza valid — simply prepend the title with the regular expression:

(?::){0}

For example:

[(?::){0}cisco:*]
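To see why this prefix does not change which sourcetypes match, note that (?::){0} is a non-capturing group containing a colon, repeated exactly zero times, so it always matches the empty string. The Python sketch below checks this with the standard re module. It is only an illustration: it assumes the wildcard * can be approximated by .*, which simplifies how Splunk interprets stanza patterns, and the function name and sourcetype names are made up for the example.

```python
import re

# The prefix from the workaround: a non-capturing group containing ":",
# repeated exactly zero times, so it consumes no characters.
PREFIX = r"(?::){0}"


def stanza_matches(pattern_body: str, sourcetype: str) -> bool:
    """Return True if PREFIX + pattern_body matches the whole sourcetype.

    pattern_body is a regular expression standing in for the wildcard part
    of the stanza title (assumption: "*" is approximated here as ".*").
    """
    return re.fullmatch(PREFIX + pattern_body, sourcetype) is not None


# Hypothetical sourcetype names, used only for illustration.
print(stanza_matches(r"cisco:.*", "cisco:asa"))
print(stanza_matches(r"cisco:.*", "pan:traffic"))
```

The first call matches and the second does not, which is the same result you would get without the prefix.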

Data Masking

Often it may be important to mask certain parts of ingested data such as Social Security Numbers and passwords, for security or compliance reasons. This kind of information should never enter the Splunk environment to begin with, and therefore it should be erased or replaced at index time, rather than at search time.

With the new Ingest Actions interface, creating these rules is straightforward and intuitive. You even have the option to upload sample data for the rule creation, to avoid ever ingesting any sensitive data.

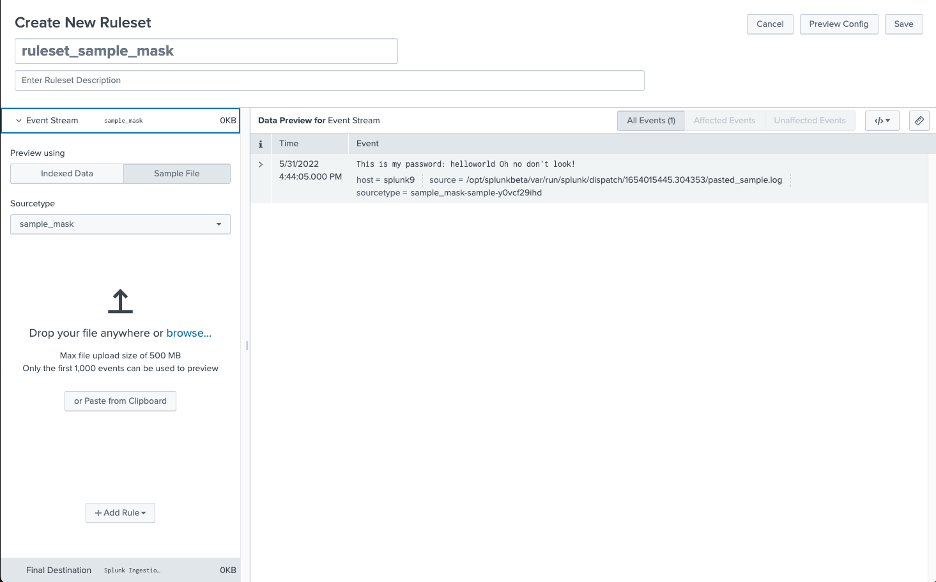

In the screenshot below I’ve used the “Paste from Clipboard” feature to create a single sample event in the same format as my sensitive data:

Next, I click Add Rule, and select Mask with Regular Expression.

Tip: If you would like to know more about writing Regular Expressions for Splunk, see our Beginner’s Guide to Regular Expressions post.

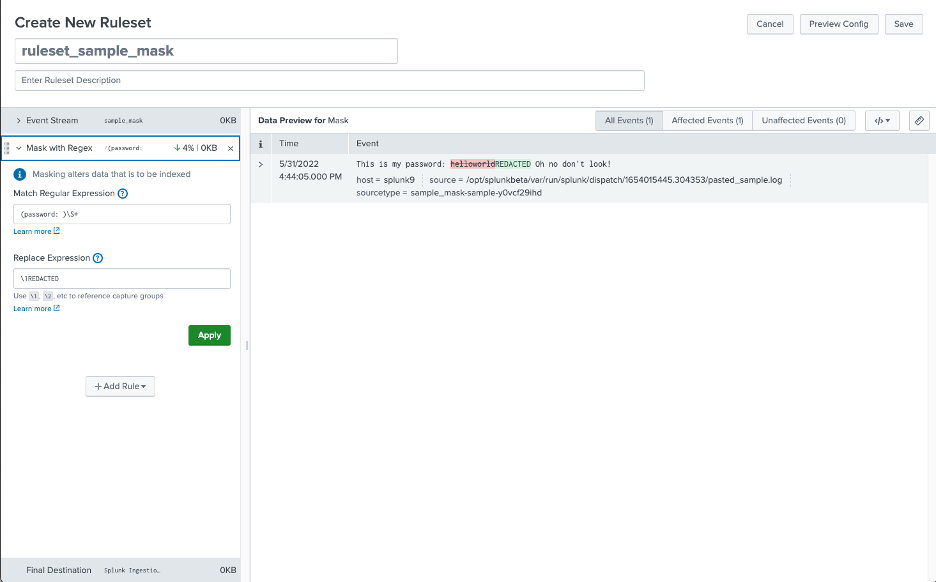

I know my passwords cannot contain spaces, so I use \S+ to match one or more non-whitespace characters. I put the preceding string within parentheses to create a capture group, which can be referenced in the replacement expression using \1.
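If you want to try the same idea outside of Splunk first, here is a minimal Python sketch of a capture group plus \S+ with a \1 back-reference in the replacement. The event format, the password= label, and the replacement string are assumptions for illustration; the actual expression used in the rule appears only in the screenshot.

```python
import re

# Hypothetical event format; the real sample event is only shown in the screenshot.
SAMPLE_EVENT = "2022-06-14 10:15:02 user=alice password=hunter2 action=login"

# Group 1 captures the literal text that precedes the secret;
# \S+ then matches the password itself (one or more non-whitespace characters).
PASSWORD_PATTERN = re.compile(r"(password=)\S+")


def mask_password(event: str) -> str:
    """Replace the password value while keeping the captured prefix via \\1."""
    return PASSWORD_PATTERN.sub(r"\1********", event)


print(mask_password(SAMPLE_EVENT))
```

Events without a password field pass through unchanged, since the pattern simply does not match them.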

Note that the percentage and volume (in KB) of data masked are displayed in the rule header, and the totals are shown at the bottom.

A more detailed report is available by clicking the ruler icon at the top right.

Data Filtering

You may have a dataset which contains vital logs, but also a significant amount of superfluous spam. The last thing you want is to eat up your license on data which will only serve to slow down searches, complicate SPL day-to-day and increase resource utilization.

Ingest Actions allow for filtering using either regular expressions or eval expressions.

Apply a regular expression filter to _raw, index, sourcetype, source, or host, and any event that matches will not be indexed.
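The behavior can be pictured with a short Python sketch: pick a field, test it against a regular expression, and drop every event that matches. This is a conceptual model only, not how Splunk implements the filter. The function name, the dictionary layout of the events, and the sample data are all hypothetical.

```python
import re
from typing import Dict, Iterable, List

Event = Dict[str, str]


def filter_by_regex(events: Iterable[Event], field: str, pattern: str) -> List[Event]:
    """Keep only events whose chosen field does NOT match the pattern."""
    regex = re.compile(pattern)
    return [e for e in events if not regex.search(e.get(field, ""))]


# Hypothetical events, used only for illustration.
events = [
    {"_raw": "INFO user login ok", "host": "web01"},
    {"_raw": "DEBUG heartbeat", "host": "web01"},
    {"_raw": "ERROR disk full", "host": "db01"},
]

# Drop anything whose raw text starts with DEBUG.
kept = filter_by_regex(events, "_raw", r"^DEBUG")
for event in kept:
    print(event["_raw"])
```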

An eval expression in this context is the same as a conditional statement you’d use in the first argument of an if function in an eval command. It can use any of the same eval functions such as true() or len(), and should evaluate to true or false. Events for which the expression evaluates to true will not be indexed.
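In other words, the eval expression acts as a "should this event be dropped?" test. The Python sketch below models that with an ordinary function that returns True or False. It is an analogy, not Splunk syntax: the function names, the 20-character threshold, and the sample events are hypothetical, with the length check loosely standing in for a len()-based eval condition.

```python
from typing import Callable, Dict, Iterable, List

Event = Dict[str, str]


def filter_by_predicate(
    events: Iterable[Event], should_drop: Callable[[Event], bool]
) -> List[Event]:
    """Keep only events for which the predicate evaluates to False."""
    return [e for e in events if not should_drop(e)]


def too_long(event: Event) -> bool:
    """Hypothetical condition: drop events whose raw text exceeds 20 characters."""
    return len(event["_raw"]) > 20


# Hypothetical events, used only for illustration.
events = [
    {"_raw": "short event"},
    {"_raw": "x" * 50},
]

kept = filter_by_predicate(events, too_long)
print(len(kept))
```

A predicate that always returns True, much like using true() as the whole expression, would drop every event in the stream.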

Data Routing

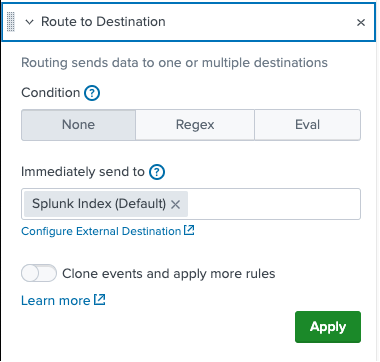

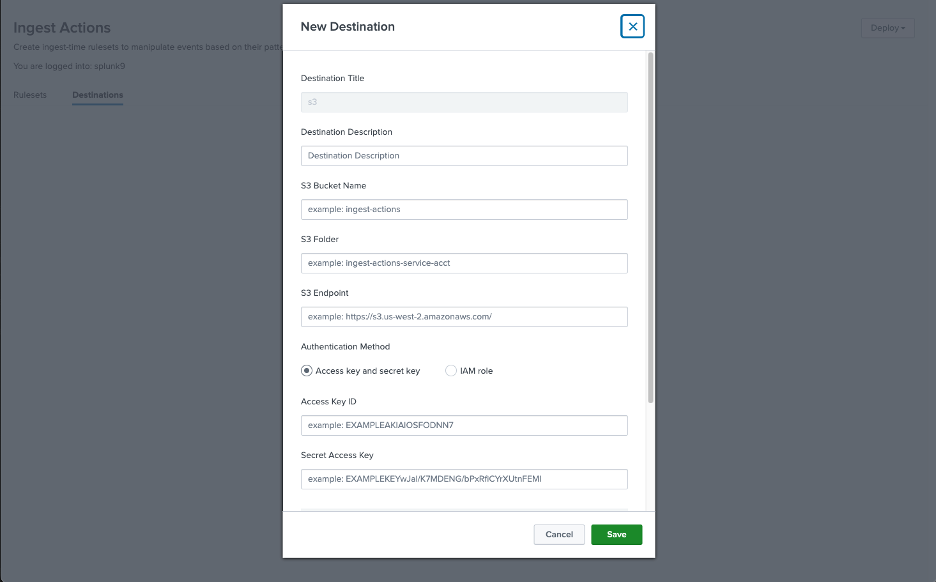

There may be times you wish to filter out certain data, but not drop it entirely. With the data routing ingest action, you can identify data to be routed elsewhere, either to a Splunk index or to an external S3-compliant destination.

Data may be filtered by a regular expression, an eval expression, or not at all.

If you would like to route the data, but not prevent it from also being normally indexed, toggle the clone event option.
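The effect of the clone option is easiest to see side by side. This Python sketch models routing as splitting a list of events into two lists, one for normal indexing and one for the alternate destination. It is a conceptual model under stated assumptions, not Splunk's implementation: the function name, the use of None to mean "no filter", and the sample events are all hypothetical.

```python
import re
from typing import List, Optional, Tuple


def route_events(
    events: List[str], pattern: Optional[str], clone: bool
) -> Tuple[List[str], List[str]]:
    """Split events into (normally indexed, routed to the other destination).

    pattern=None models "no filter": every event is routed.
    clone=True keeps routed events in the normal index as well.
    """
    regex = re.compile(pattern) if pattern is not None else None
    indexed: List[str] = []
    routed: List[str] = []
    for event in events:
        selected = regex is None or regex.search(event) is not None
        if selected:
            routed.append(event)
            if clone:
                indexed.append(event)
        else:
            indexed.append(event)
    return indexed, routed


# Hypothetical events, used only for illustration.
events = ["audit: login", "app: started", "audit: logout"]

print(route_events(events, r"^audit:", clone=False))
print(route_events(events, r"^audit:", clone=True))
```

Without cloning, the audit events leave the normal index entirely; with cloning, they appear in both places.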

External S3 destinations can be defined once and used any number of times.

Splunk Pro Tip: This type of work can be a considerable resource expense when executing it in-house. The experts at Kinney Group have several years of experience architecting, creating, and solving in Splunk. With Expertise on Demand, you’ll have access to some of the best and brightest minds to walk you through simple and tough problems as they come up.

What’s next?

This is just one of the many incredible new features available in Splunk 9! Need to get up and running with Splunk 9 quickly? Our expertise (nearly 700+ Splunk engagements over the years) coupled with Atlas — The Creator Empowerment Platform for Splunk — means we can make your transition to Splunk 9 quick and effective!

We’d love to hear from you! Check out the Atlas overview video to learn more about empowering your team of Splunk Creators.